Light Field SLAM

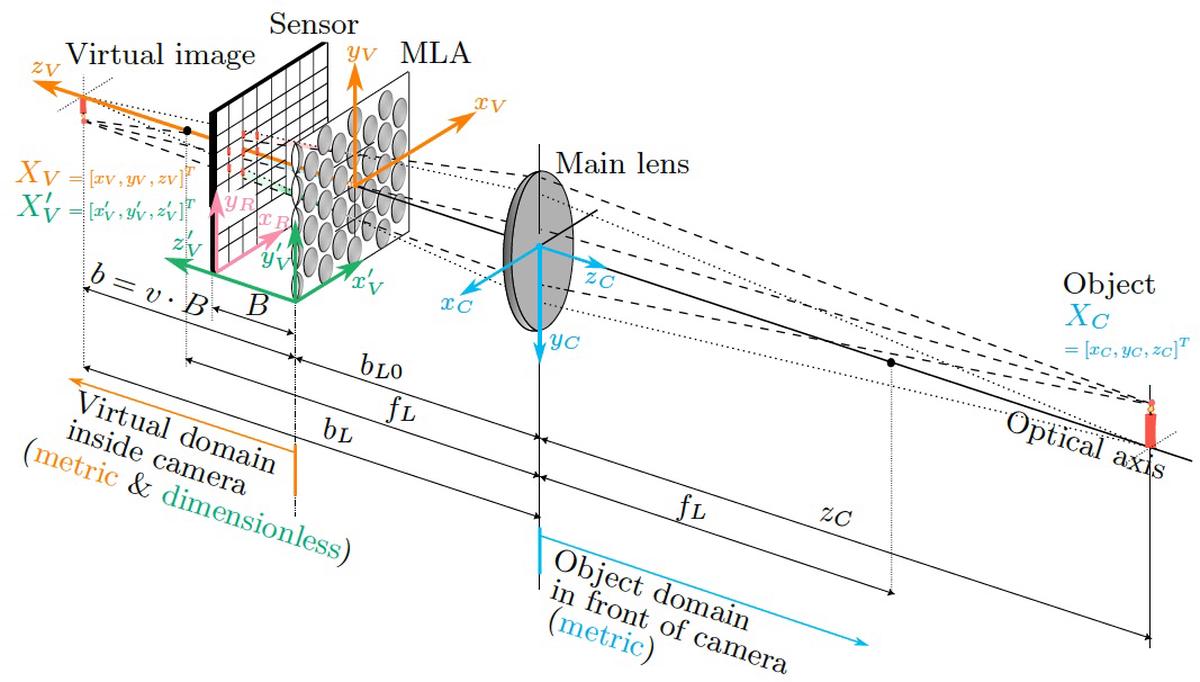

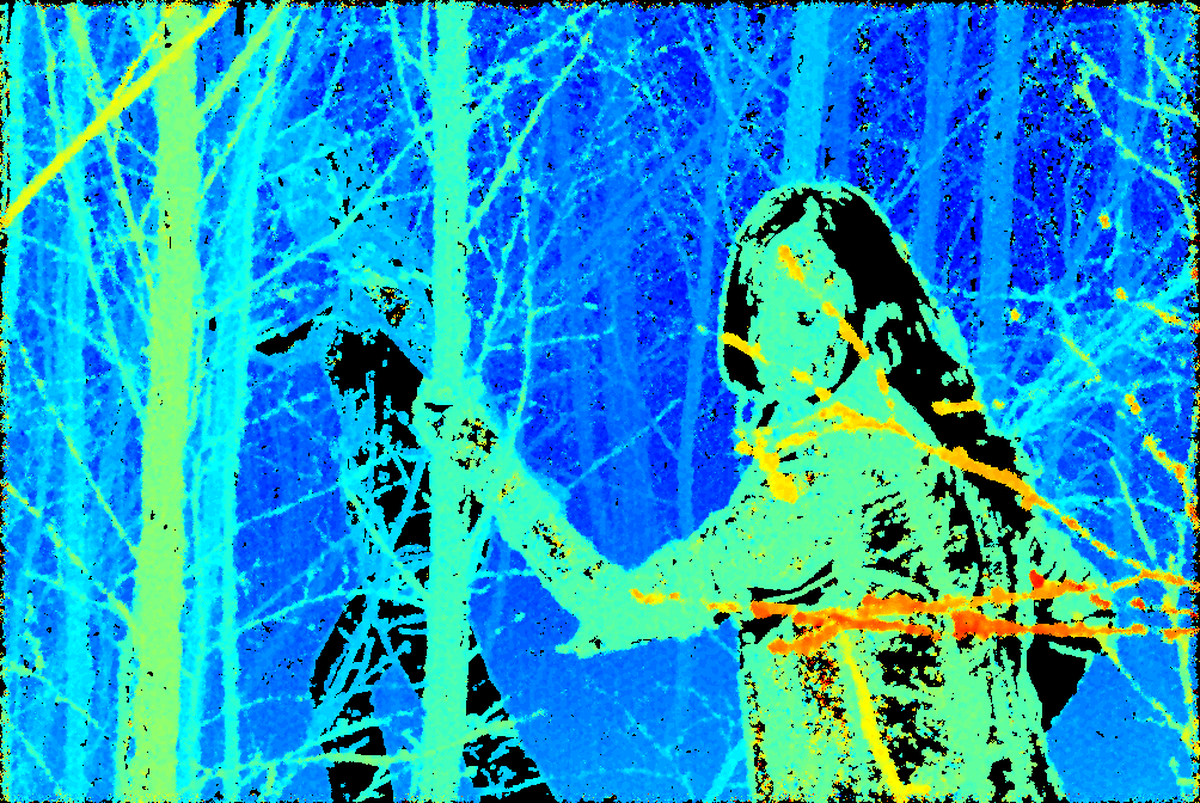

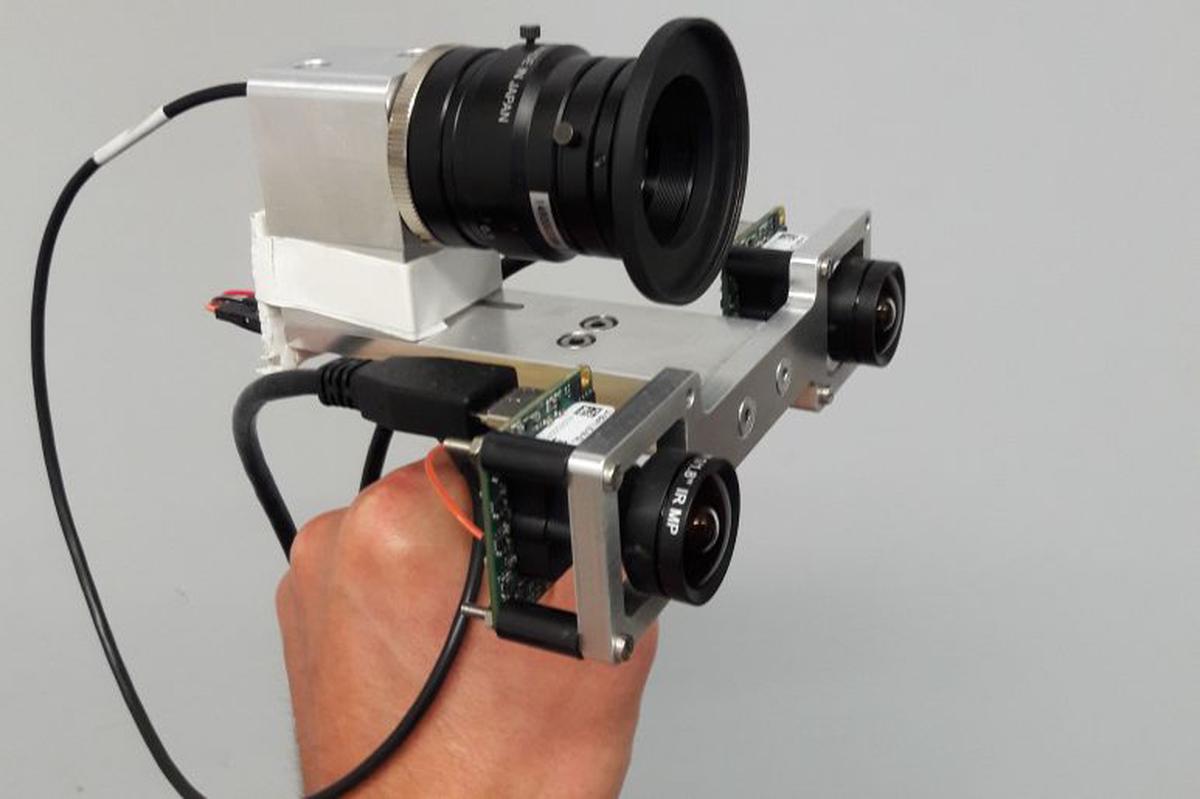

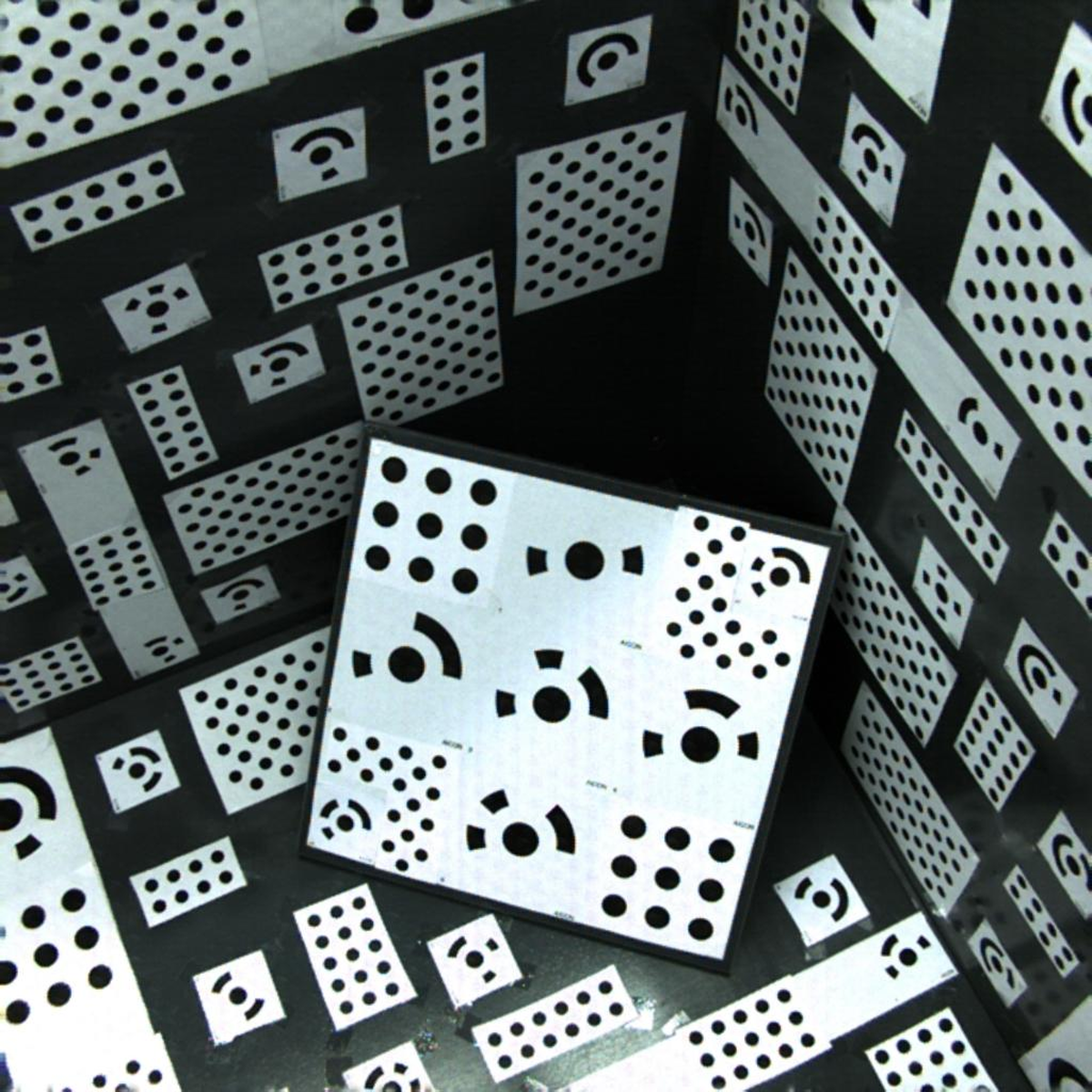

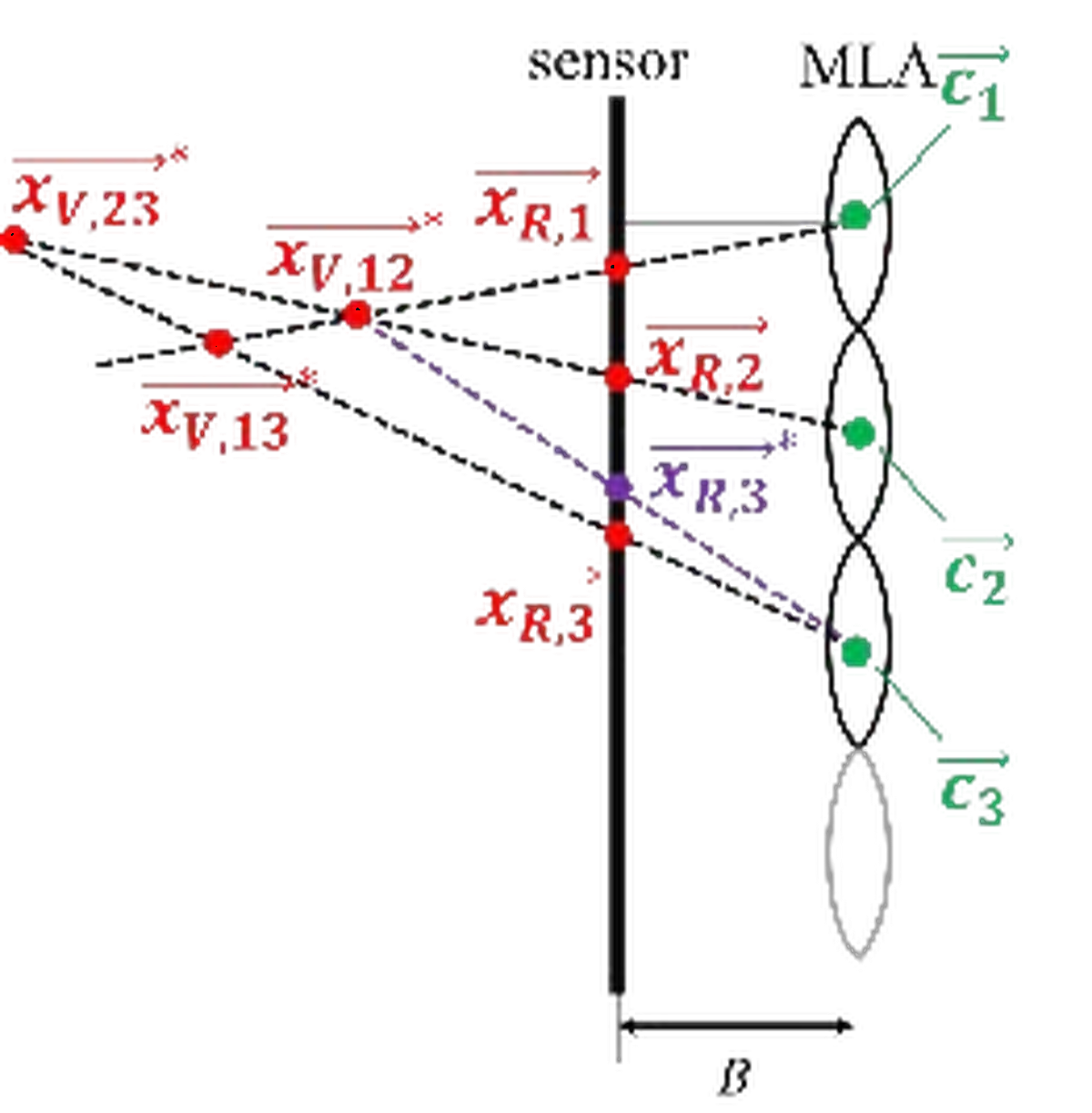

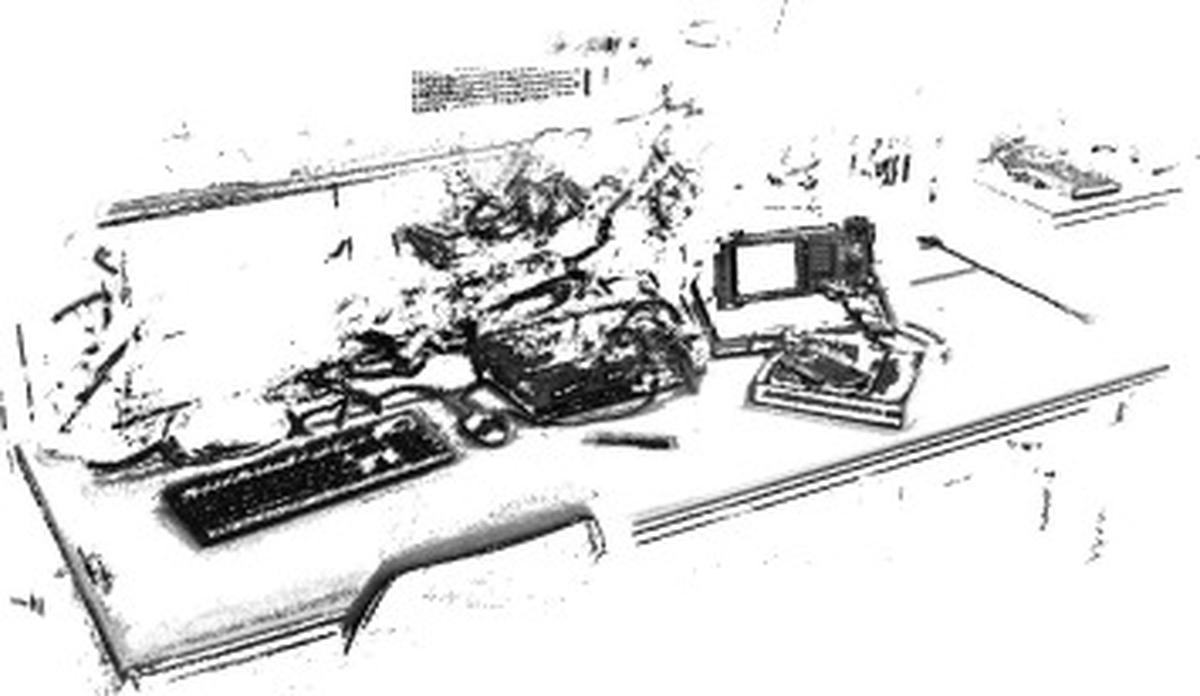

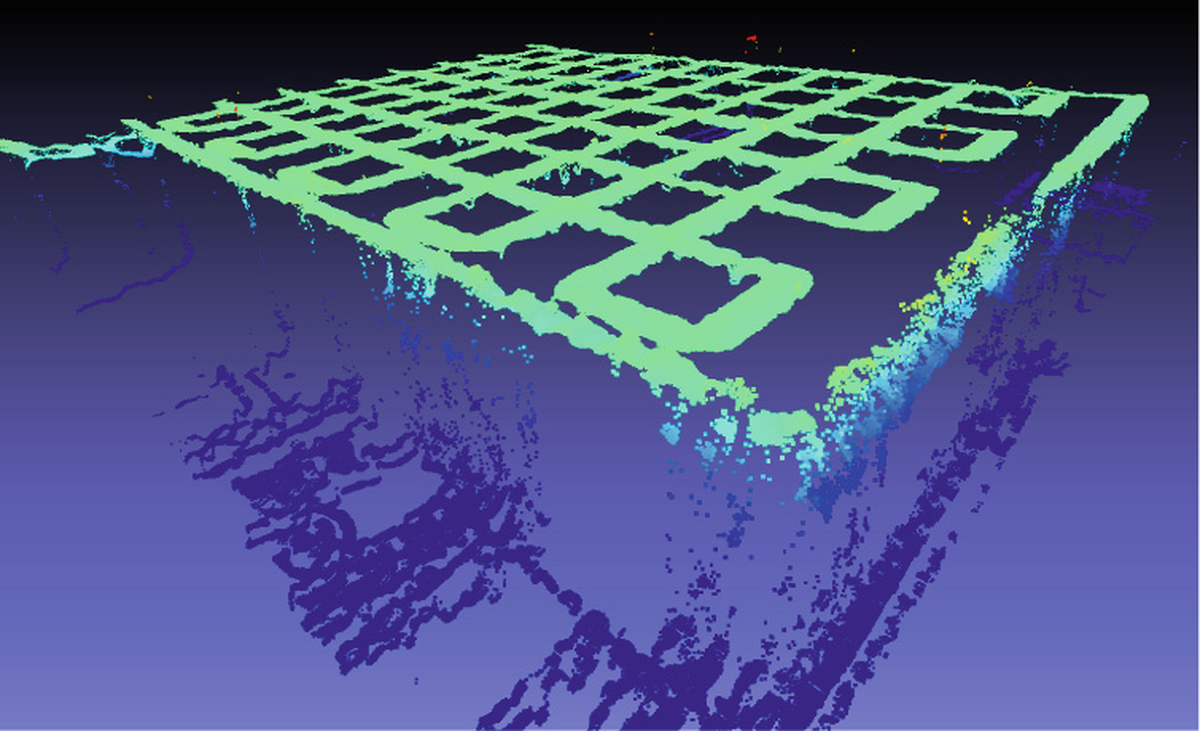

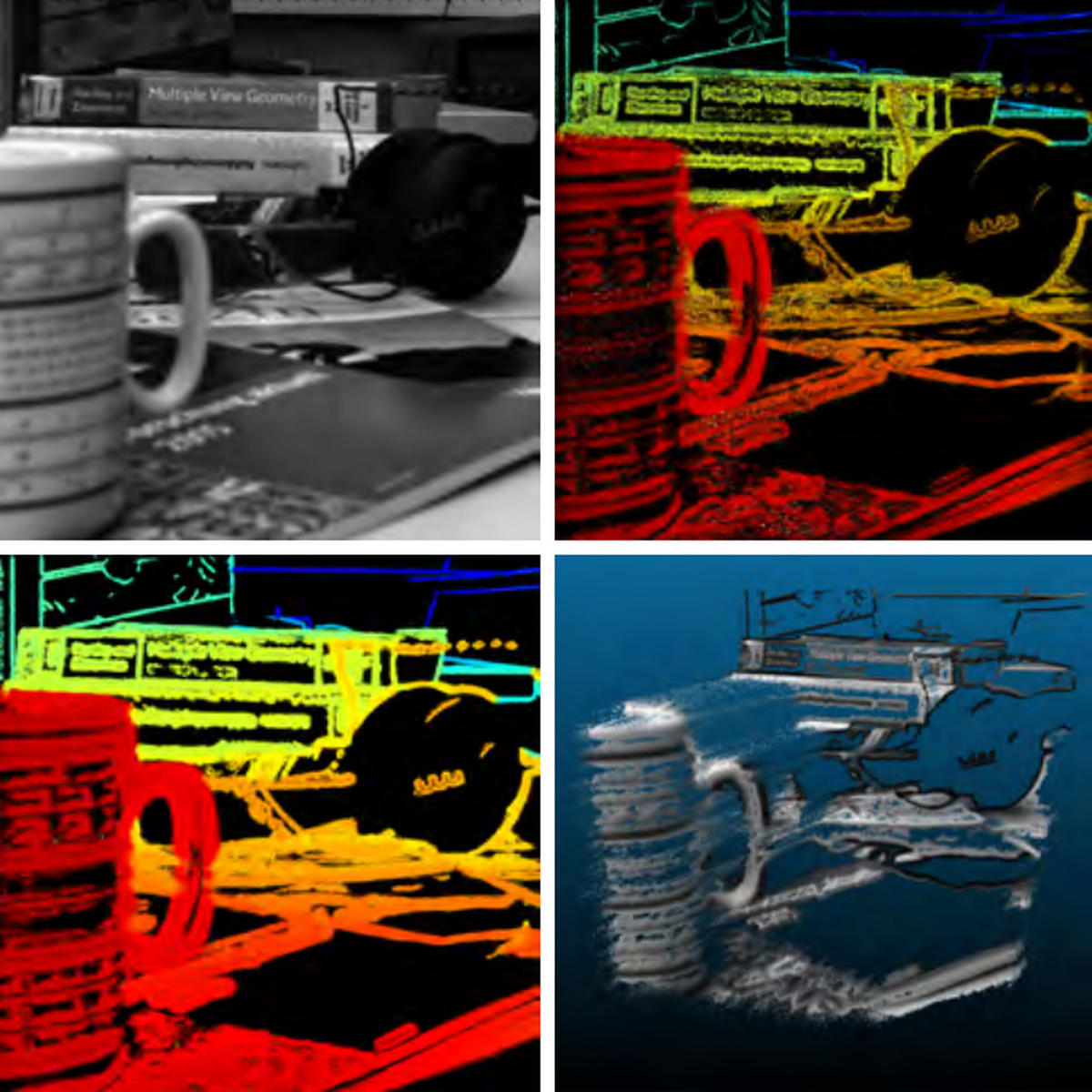

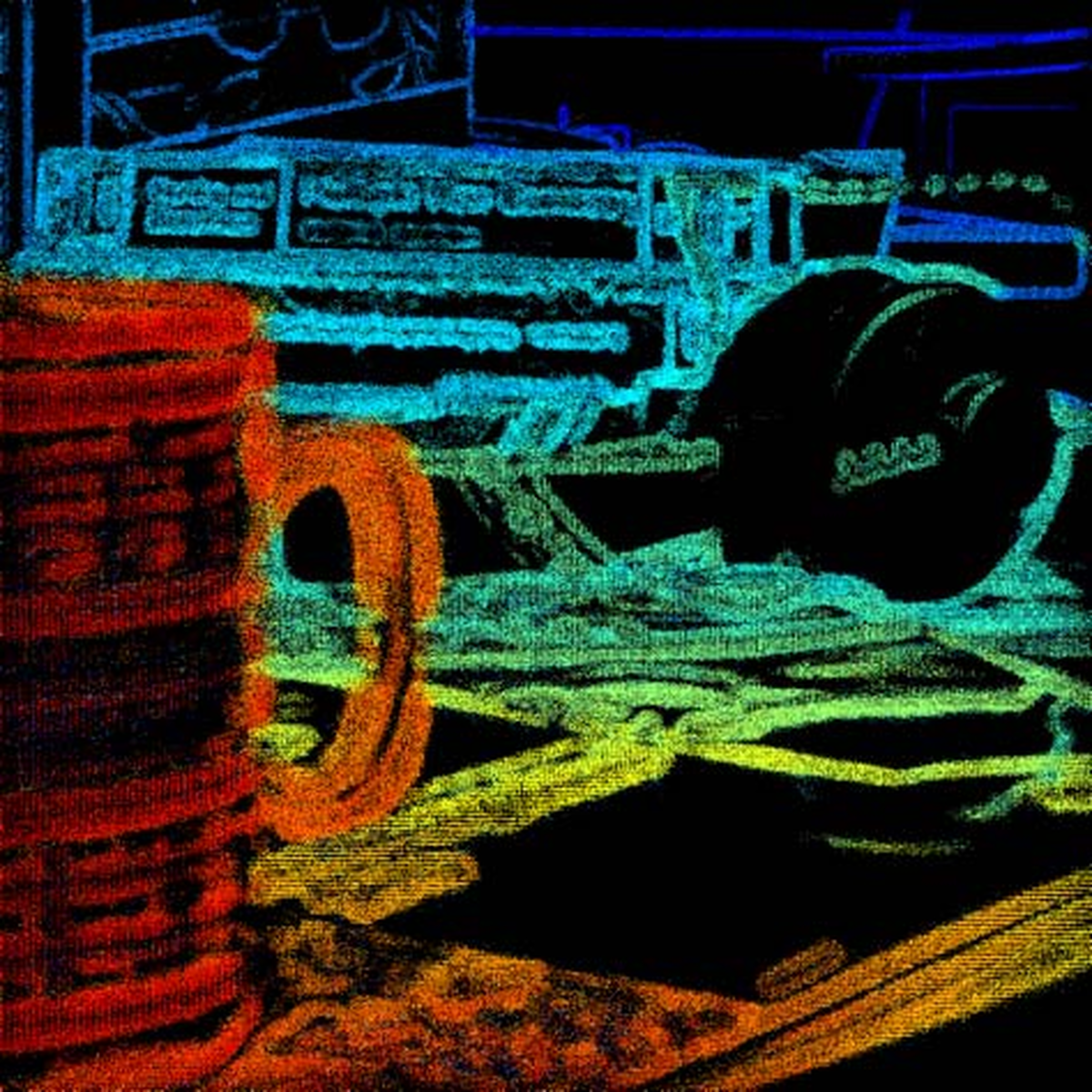

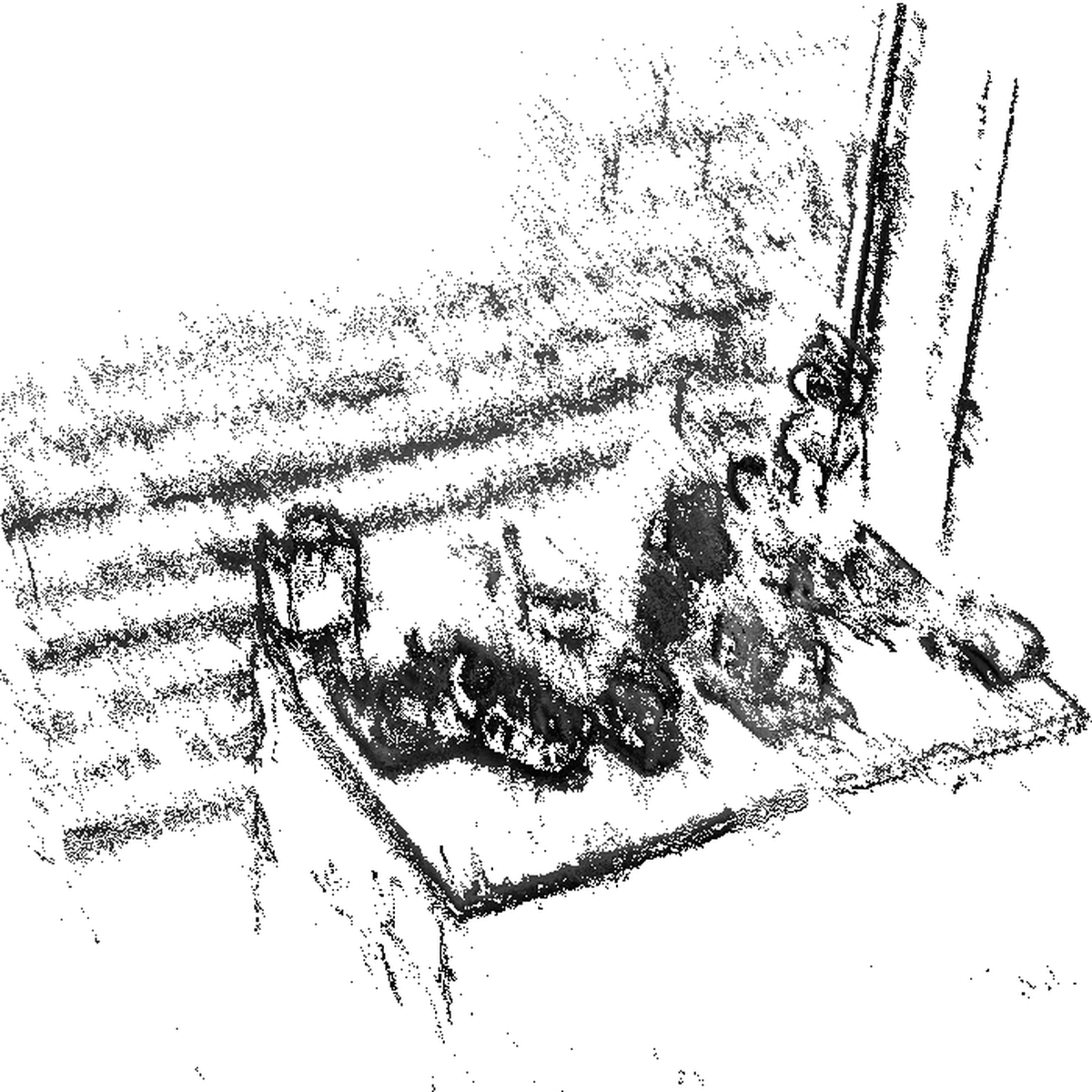

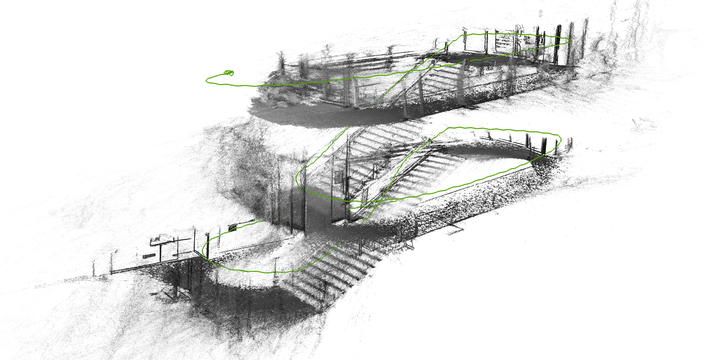

In contrast to standard cameras, light field (or plenoptic) cameras capture the light field of a scene in four dimensions, i.e. both spatial and directional information.

Among other things, this makes it possible to obtain 3D information from a single recording of a light field camera.

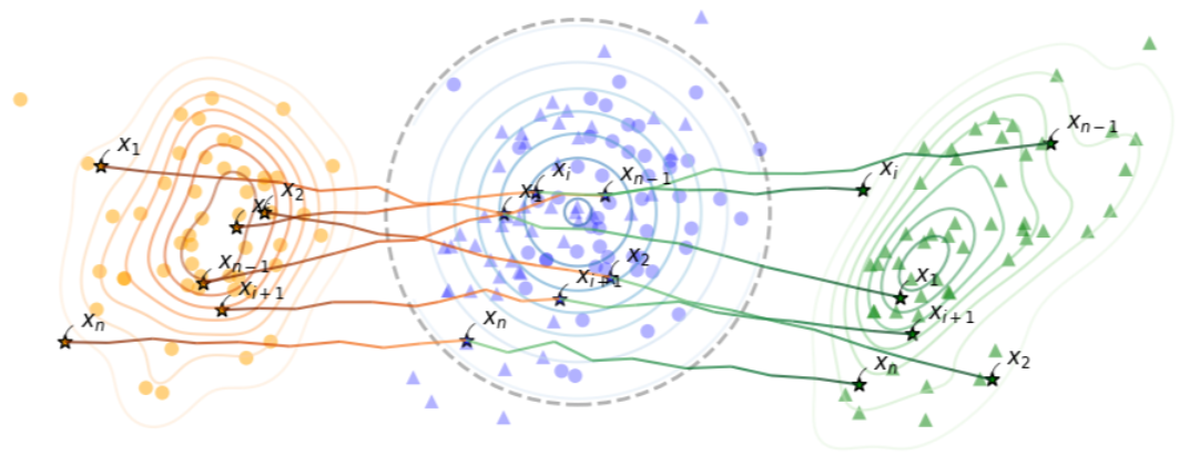

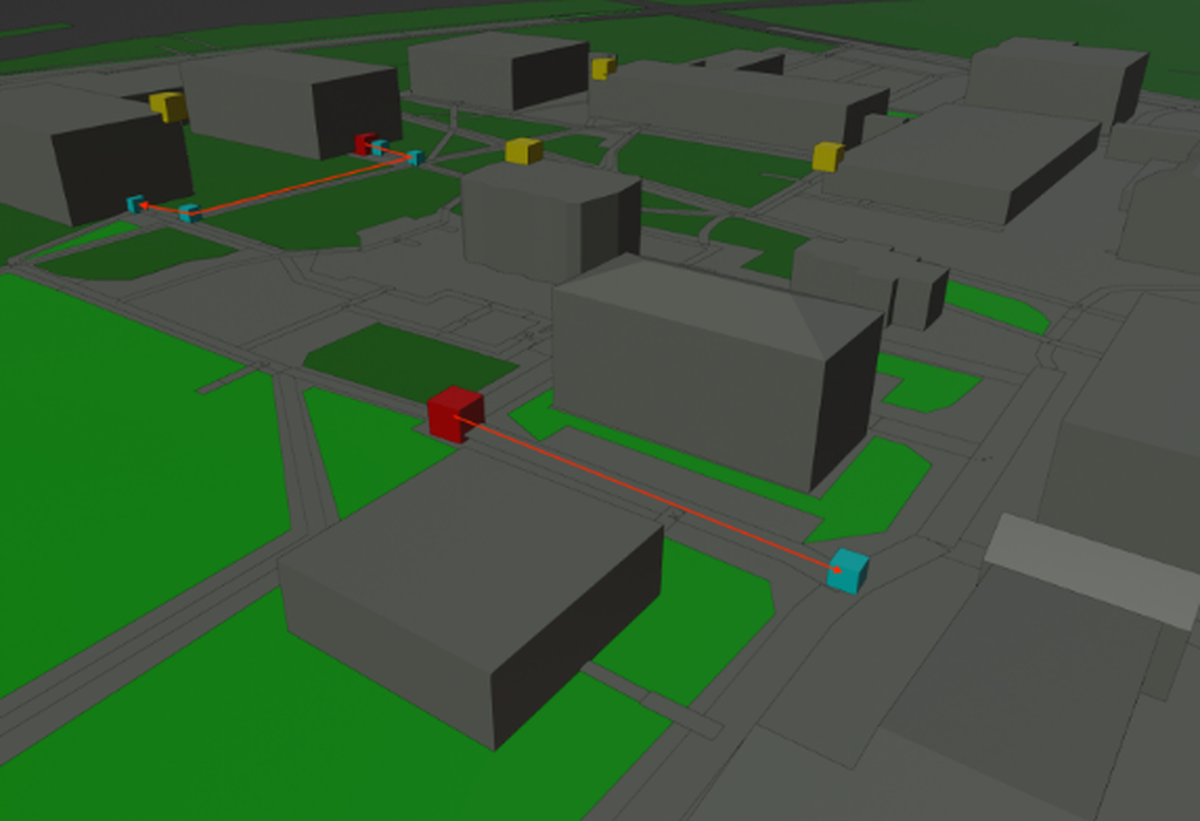

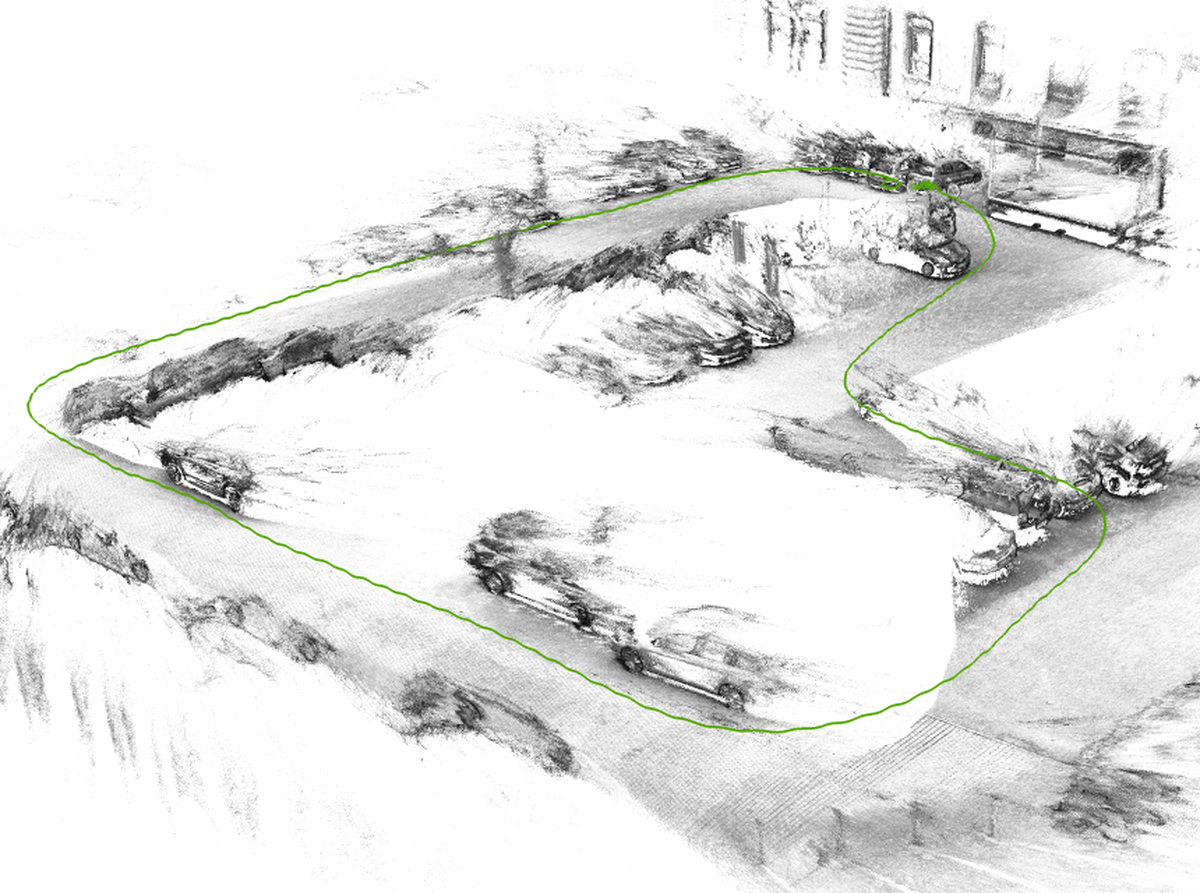

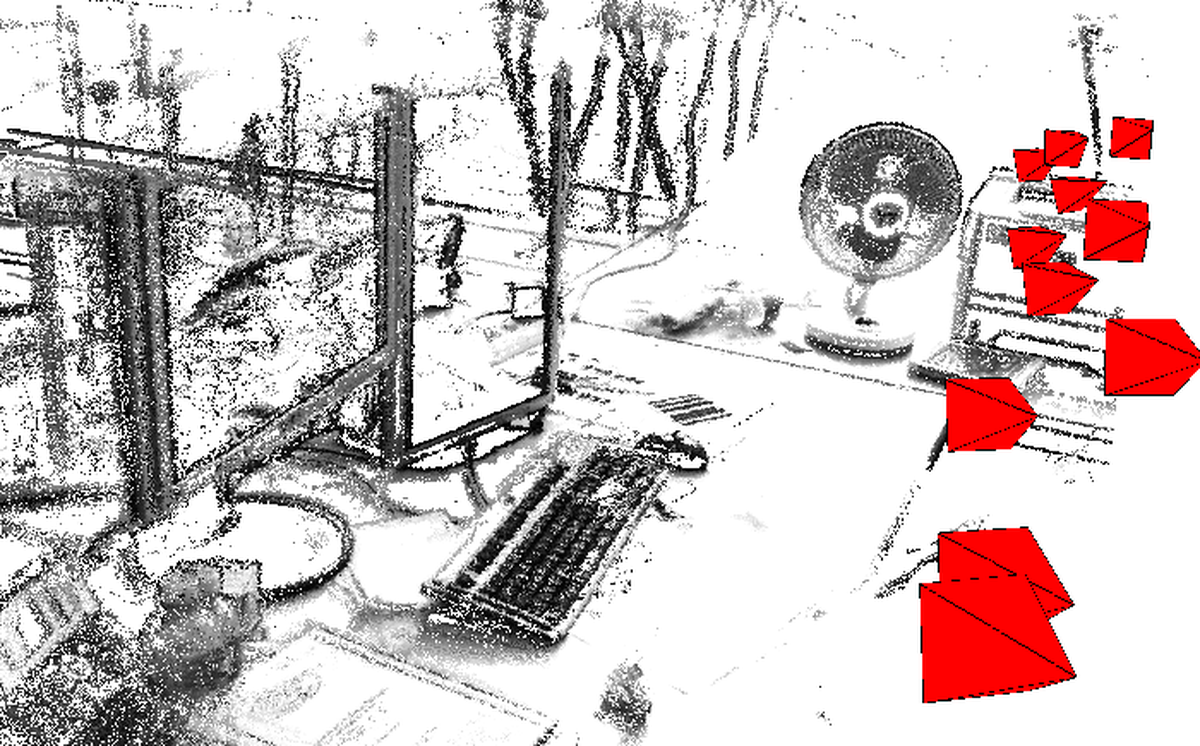

Light field camera-based simultaneous localization and mapping (SLAM) estimates the camera trajectory and a 3D reconstruction of the environment from an image sequence recorded by a light field camera. The 4D light field enables metric-scale tracking and mapping with a single camera.
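To illustrate why a single light field recording can carry metric depth, the following minimal sketch triangulates depth from the disparity between two views of the same scene point. It assumes a simplified pinhole stereo model with a known baseline between the two views inside the camera. The function name, parameters, and numeric values are hypothetical and chosen for illustration only; this is not the calibration model or the method used in the publications listed on this page.

```python
def depth_from_disparity(focal_px: float, baseline_mm: float, disparity_px: float) -> float:
    """Triangulate metric depth (in mm) from the disparity between two views.

    Simplified pinhole stereo relation: z = f * b / d.
    Assumption: both views share the focal length `focal_px` (in pixels) and
    are separated by a known baseline `baseline_mm` (in millimetres).
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the camera")
    return focal_px * baseline_mm / disparity_px


if __name__ == "__main__":
    # Hypothetical values: 500 px focal length, 2 mm baseline, 0.25 px disparity.
    z_mm = depth_from_disparity(focal_px=500.0, baseline_mm=2.0, disparity_px=0.25)
    print(f"estimated depth: {z_mm / 1000.0:.2f} m")
```

Because the baseline is a physical property of the camera and is known from calibration, the resulting depth is in metric units. With a small baseline, a given disparity error translates into a large depth error at long range, so the sketch is best read as an intuition aid, not as an accuracy model.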

Publications

TurnBack: A Geospatial Route Cognition Benchmark for Large Language Models through Reverse Route

Hongyi Luo, Qing Cheng, Daniel Matos, Hari Krishna Gadi, Yanfeng Zhang, Lu Liu, Yongliang Wang, Niclas Zeller, Daniel Cremers, Liqiu Meng

Conference on Empirical Methods in Natural Language Processing (EMNLP), 2025

Generating a Totally Focused Colour Image from Recordings of a Plenoptic Camera

Marcel Sütterlin, Niclas Zeller, Franz Quint

International Symposium on Electronics and Telecommunications (ISETC), 2018

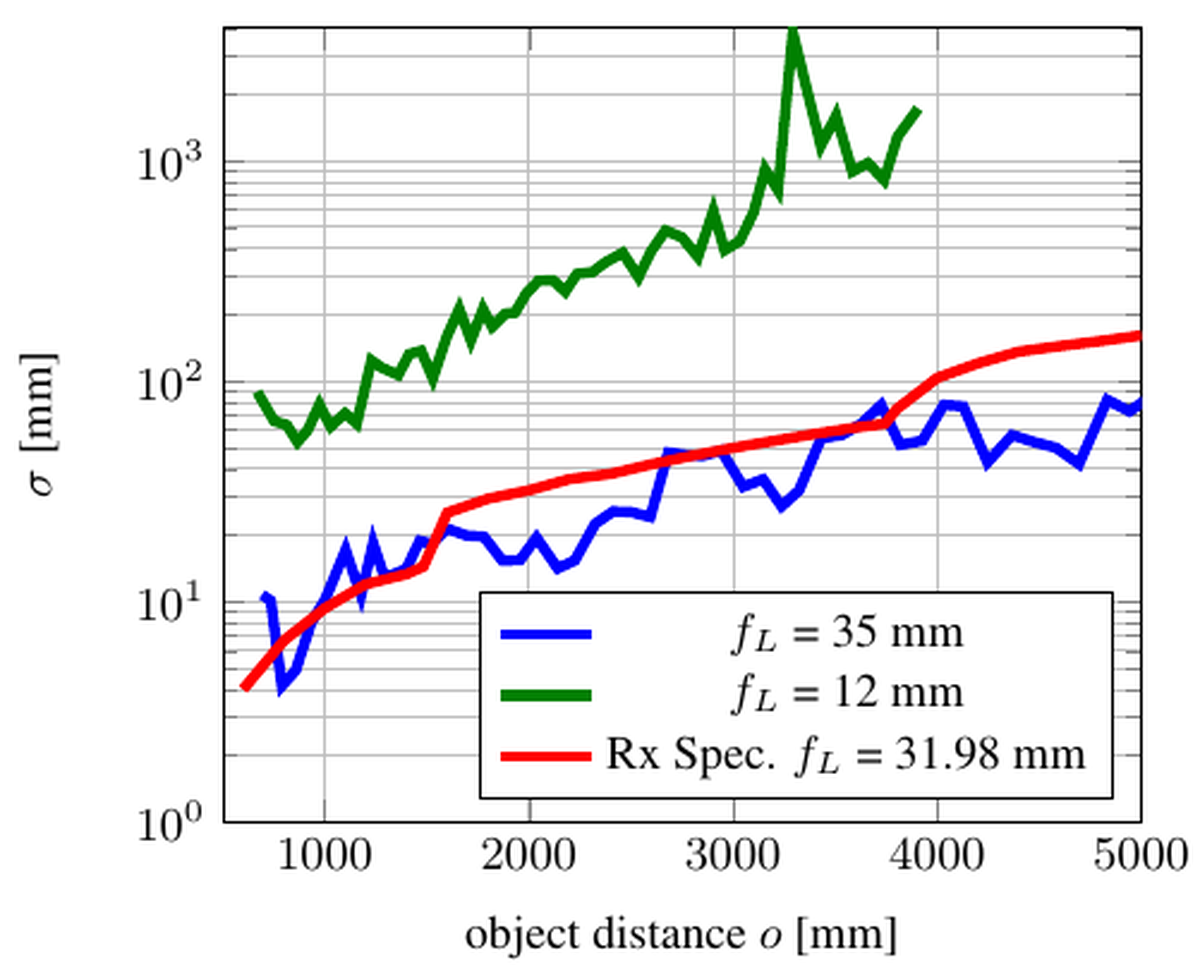

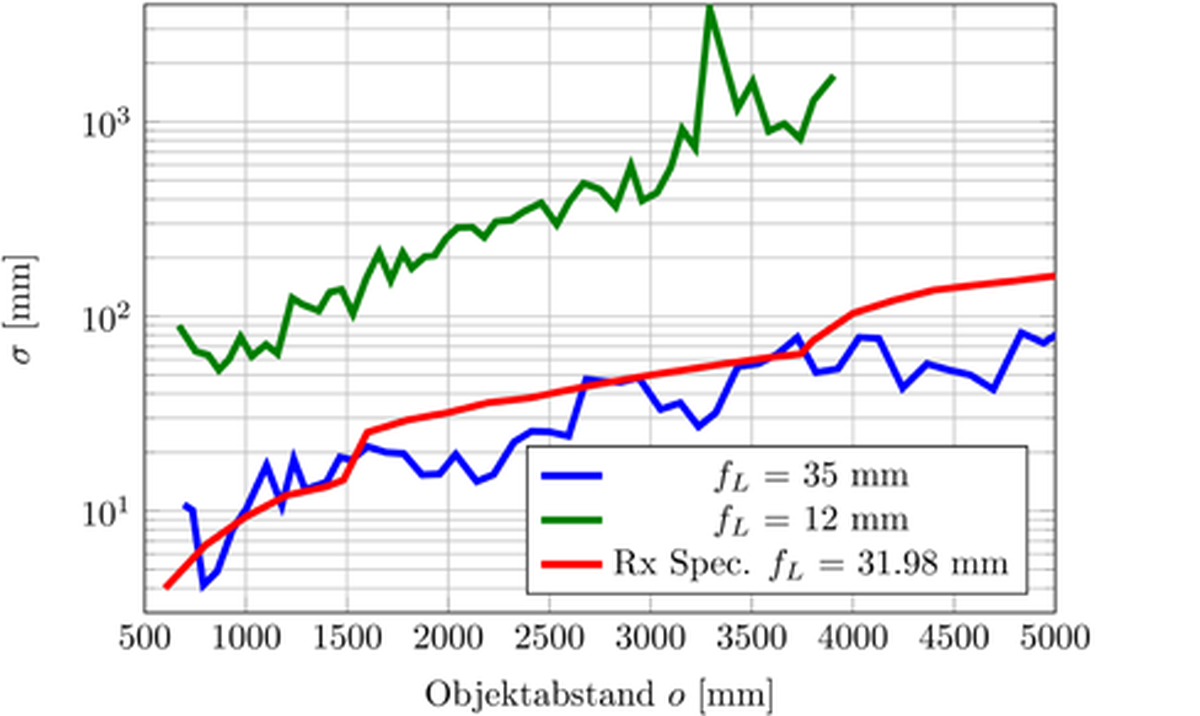

Investigating Mathematical Models for Focused Plenoptic Cameras

Niclas Zeller, Franz Quint, Marcel Sütterlin, Uwe Stilla

International Symposium on Electronics and Telecommunications (ISETC), 2016